I recently got a request to write an automation to check the warranty status of all our computers. Simple request, huh?

I decided the best way was to write a script that exports all the assets from our Freshservice instance and reads the serial number of each one. The next stage is a script that actually checks the warranty status with HP, but their warranty API is currently offline, so that will come later. Right now we’re only exporting the assets and getting the serials from Freshservice.

You will need to find your API key in your Freshservice profile, and your instance URL, which you should already know. The next thing to check is whether your field ID for the serial number is the same as ours; in our case it’s “serial_number_26000476017”, but I guess that may vary.

#################################################

# Export all assets and get serial to check with HP warranty

#################################################

# API Key

$FDApiKey="XXXXXX"

#################################################

# Prep

$pair = "$($FDApiKey):$($FDApiKey)"

$bytes = [System.Text.Encoding]::ASCII.GetBytes($pair)

$base64 = [System.Convert]::ToBase64String($bytes)

$basicAuthValue = "Basic $base64"

$FDHeaders = @{ Authorization = $basicAuthValue }

[Net.ServicePointManager]::SecurityProtocol = [Net.SecurityProtocolType]::TLS12

# The Doing part

$FDAssetData = ""

$Output = @()

$i = 1

do

{

$FDAssetDatatmp = ""

$FDBaseEndpointSummary = "https://<your fresh URL>/cmdb/items.json?page=$i"

# You may want to write this out to check how many pages it loads

# write-host $FDBaseEndpointSummary

$FDAssetData = Invoke-WebRequest -uri $FDBaseEndpointSummary -Headers $FDHeaders -Method GET -ContentType application/json | ConvertFrom-Json

$Output = $Output + $FDAssetData

$i++

} while($FDAssetData)

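# $i is now two past the last page with data: one increment after that page, one after the empty page that ended the loop

Write-host -foregroundcolor Cyan "$($i - 2) pages imported..."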

#Now let's go through every row and only filter out the HP computers and get the serial number.

foreach($Outputrow in $Output)

{

$name = ""

$serial = ""

$name = $Outputrow.name

# This levelfield for serial number may not be the same for everyone, please check this up!

$serial = $Outputrow.levelfield_values.serial_number_26000476017

# Using the example of HP here

if($Outputrow.product_name -like "HP*")

{

write-host -foregroundcolor Green "Here you can check warranty for $name with serial $serial..."

}

else

{

write-host -foregroundcolor Yellow "Asset $name is not an HP computer."

}

}

Credit goes to Mark Wilkinson for writing the basics of this in a forum post here.
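Since the numeric suffix on the serial field may differ between instances, here is a minimal sketch of looking the field up by its prefix instead of hard-coding it. The helper name `Get-AssetSerial` and the sample assets are made up for illustration; the only thing taken from the script above is the shape of a row (`name`, `product_name`, `levelfield_values`).

```powershell
# Hypothetical helper: find the serial field without hard-coding its numeric suffix.
function Get-AssetSerial {
    param(
        [Parameter(Mandatory)] $Asset
    )
    $levelFields = $Asset.levelfield_values
    if (-not $levelFields) { return $null }

    # Pick the first property whose name starts with "serial_number_".
    $field = $levelFields.PSObject.Properties |
        Where-Object { $_.Name -like 'serial_number_*' } |
        Select-Object -First 1

    if ($field) { return $field.Value }
    return $null
}

# Sample rows shaped like the ones in the script above (all values are invented).
$sampleAssets = @(
    [pscustomobject]@{
        name = 'LAPTOP-001'; product_name = 'HP EliteBook'
        levelfield_values = [pscustomobject]@{ serial_number_26000476017 = 'ABC123' }
    },
    [pscustomobject]@{
        name = 'LAPTOP-002'; product_name = 'Other Vendor'
        levelfield_values = [pscustomobject]@{ serial_number_26000476017 = 'XYZ789' }
    }
)

# Keep only the HP rows and emit name + serial as objects.
$hpSerials = $sampleAssets |
    Where-Object { $_.product_name -like 'HP*' } |
    ForEach-Object {
        [pscustomobject]@{ Name = $_.name; Serial = Get-AssetSerial -Asset $_ }
    }

# To save the list for the later warranty check (path is just an example):
# $hpSerials | Export-Csv -Path 'C:\temp\hp_serials.csv' -NoTypeInformation
```

Emitting objects instead of only writing to the console means the HP warranty step can pick the list up from a CSV later, once their API is back.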

Here is a script I had to put together to remove all e-mail addresses containing a specific domain (“contoso.com” in this example) on all your objects in your on-prem AD.

Naturally this script can be altered to only include a specific subset of users, just change the “get-adobject” to match.

What sets this script apart from all the others you can find out there on the Internet is that this uses on-prem AD. Reason for this is that a lot of organizations out there are using their on-prem AD to sync their users and groups and not all of them have complete on-prem Exchange infrastructure to manage this so using this seemed a lot better. At least it did for us.

Of course this script writes to the console what it does every step of the way, and it writes to a date-stamped transcript, making tracing and rollbacks easy-ish.

It won’t remove the e-mail address if it’s a primary e-mail since that may cause issues but it will flag it in red so pay attention to your console or search the transcript for “primary”.

$DateStamp = Get-Date -Format "yyyy-MM-dd-HH-mm-ss"

$LogFile = ("c:\temp\removing_mail_addresses-" + $DateStamp + ".txt")

start-transcript $logfile

$Mailboxes = Get-adobject -Filter {proxyAddresses -like "*contoso.com"} -Properties *

foreach($mailbox in $Mailboxes)

{

$DN = ""

$upn = ""

$DN = $mailbox.DistinguishedName

$upn = $mailbox.UserPrincipalName

write-host "Processing User $upn ..."

$i=0

while($i -lt $mailbox.proxyAddresses.Count)

{

$address = $mailbox.proxyAddresses[$i]

write-host -NoNewline "Processing mail address $address..."

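if ($address -clike "smtp:*" -and $address -like "*contoso.com" )

{

write-host -ForegroundColor Yellow " removing address"

Set-ADObject -identity $dn -remove @{ProxyAddresses=$address}

# No index adjustment here: Set-ADObject changes the directory, not the local copy in $mailbox, so the list we are looping over keeps its length.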

}

elseif($address -clike "SMTP:*" -and $address -like "*contoso.com" ) { write-host -ForegroundColor Red " MAIL ADDRESS IS PRIMARY!" }

else { write-host -ForegroundColor Green " no action taken." }

$i++

}

write-host "---------------------------------------------------------------"

}

Stop-Transcript
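If you want to dry-run the decision logic before touching AD, here is a small sketch of the same three-way check as a standalone function. The name `Get-ProxyAddressAction` and the sample addresses are invented for illustration. Note one deliberate difference: it matches on `*@domain` rather than `*domain`, which is stricter than the script above and is my assumption about what you usually want.

```powershell
# Hypothetical helper: decide what the cleanup would do with one proxyAddresses entry.
function Get-ProxyAddressAction {
    param(
        [Parameter(Mandatory)][string] $Address,
        [Parameter(Mandatory)][string] $Domain
    )
    # Only addresses ending in @<domain> are candidates at all.
    if ($Address -notlike "*@$Domain") { return 'Keep' }

    # Upper-case SMTP: marks the primary address, so flag it but never remove it.
    if ($Address -clike 'SMTP:*') { return 'PrimaryFlagOnly' }

    # Lower-case smtp: marks a secondary address, which is safe to remove.
    if ($Address -clike 'smtp:*') { return 'Remove' }

    # Anything else (sip:, X500:, ...) is left alone.
    return 'Keep'
}

# Invented sample data to show the outcome for each case.
$sample = 'SMTP:anna@contoso.com',
          'smtp:anna.old@contoso.com',
          'smtp:anna@fabrikam.com',
          'sip:anna@contoso.com'

$plan = foreach ($a in $sample) {
    [pscustomobject]@{
        Address = $a
        Action  = Get-ProxyAddressAction -Address $a -Domain 'contoso.com'
    }
}
$plan
```

Running the real `proxyAddresses` values through something like this first gives you a list of what would be removed and which primaries need manual attention, without writing anything to the directory.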

As you may know, I worked for the Nordic part of the Thomas Cook Group. I was the O365 admin for a tenant with over 30,000 user accounts. And we ran the Azure AD Connect service for the entire group and had just moved to pass-through authentication with Seamless SSO. Although it was a royal pain sometimes to work in such a large company where even a minor change could take weeks to implement and get approval for from everywhere.

As you may also know, Thomas Cook Group filed for bankruptcy in October last year. And there was no advanced warning or anything about what was going to happen next. But for our part, we realised that we would (if the company survived) most likely be moving our Nordic business to a new O365 tenant so we began planning for that. Over the next few months a lot of stuff happened. The Nordic part of the group was sold off and started their own company NLTG and the old group was shutting down all parts of their business. Except the German part because they were backed by their government so they survived (more on that later).

When we got back after the Christmas break we were given a clear order to evacuate the tenant before end of February. Since we were a separate company and legal entity we were no longer entitled to share the old tenant which, even though it makes sense, pretty much lit a torch under our asses to get this done now. And we realised it wouldn’t be a pretty or a smooth operation, as I recall saying, “this is going to take a sledgehammer, not a scalpel!”. Fortunately I’m very used to sledgehammer my way to getting results. Yeap, thinking back to that SharePoint upgrade that was all over the place!

So there we were, less than 8 weeks to pull off a migration with 3,000 users, 5,500 mailboxes, 10TB of SharePoint data, 8TB of OneDrive data and 12TB of Exchange data. And this is how it went…

Identities : The building block of any good tenant is the identities. When we first planned for the migration our plan was to have a new on-prem AD that would be fed by.. well that’s irrelevant since there was no time for that. The only way forward was to use our existing on-prem AD. But the problem was that MSFT doesn’t support syncing your on-prem identities to two tenants. Why? I have no idea – I fully get how you wouldn’t want that in a production environment (since the UPN domain can only be valid in one tenant) but for a migration like this it would have solved a lot of headaches if we were allowed to do it like that. But nope, and we really wanted to have Microsoft support for this. And we also had to retain our e-mail domains since we’re heavily dependent on the brand, which is almost as Swedish as Ikea, at least in Sweden. So that presented us with the first big problem – pre-populating the new tenant with 3,000 user objects so we could start copying the data, and then, when it was time to migrate, playing around with the UPN domains so the matching would work. But the first step was creating the 3,000 users as cloud-only “onmicrosoft” accounts. This was done using PowerShell to export as much info on the users as possible (including “usagelocation” and “preferredlanguages” since we’re an org with offices from Thailand to Mexico!) and then PowerShell to recreate the users as closely as possible. Another step we had to take here was setting up a filter in Azure AD Connect that would only sync users to each tenant depending on the value of an extensionattribute. That way we could make sure no user was synced to both tenants at any time. It did take a lot of tinkering to get that logic working, but fortunately Microsoft have documented how to do attribute filtering, so thanks for that.

Authentication: Remember how I said we’d just gone over to PTA for the old tenant? Well this little thing meant that as long as users were logging in to the old tenant (which we knew the German company would) we couldn’t use PTA for our users, since the Seamless SSO part of it is all based around a computer object in the AD forest with a Kerberos encryption key that’s tied to the tenant! So if we set it up for our new tenant that would change the key on the computer object and they wouldn’t be able to log in anymore! So to solve this we did a “quick and dirty” setup of a temporary AD FS for our users to use based on domain. This was a surprisingly easy thing to do in Windows Server 2019 but it was an added “gotcha!” of this entire scenario!

SharePoint : The first problem with SharePoint was to determine which sites were relevant to keep and which weren’t. Our entire SharePoint was well over 20TB so we had to make sure to only copy over sites we knew were relevant to the Nordics business. But there’s no way of determining that without going through all the underlying permissions and groups to determine if “our” users are working on the site or not. It’s not like you can ask SharePoint to “give me all the sites that any user with the UPN domain @domain.se is working on”. Or maybe there is, I just didn’t have the knowledge to write that powershell at the time. Once that was done we used ShareGate to migrate all the SharePoint data. The biggest fear was that it wouldn’t be able to match the old identities with the new ones – which it did! I’m pretty sure it went by “DisplayName” to match them but we’re just very very thankful it did because that would have been a mess to sort out. The biggest issue I had with ShareGate was how unpredictable it was when it came to doing incremental copies, which was done through powershell. We split it up on 4 different servers with about 80 sites per server. Sometimes it could complete them all in 2 hours, sometimes it took 8 hours for one server, sometimes longer. During the weekend of the actual move it took well over 12 hours to complete which caused me a bit of unnecessary stress.

OneDrive : Since we already had a pretty nice “masterlist” of users that we would be migrating it was pretty easy to set up a CSV file to map “Old OneDrive -> New OneDrive” that we then used ShareGate to copy. That went pretty nicely, although there were some instances of data not being copied over, so we had to sort that out after the switch when people were missing a few files. Other than that the issue was the same as above – it was very unpredictable and I had to mess around with the queues on the weekend of the switch. We had one incident of a user’s OneDrive being almost empty, but looking back at the old OneDrive it was empty too. So our theory there is that his OneDrive client must’ve been paused, so we had to send that computer to the lab for data recovery – but that’s not ShareGate’s fault one bit!
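For anyone building a similar mapping file, here is a rough sketch of generating the “Old OneDrive -> New OneDrive” rows from a master list. It assumes the usual OneDrive URL pattern where the UPN has its non-alphanumeric characters replaced by underscores; verify that against your real site URLs before trusting it. The tenant names, the UPNs and the column names `SourceSite`/`DestinationSite` are all invented and are not taken from ShareGate’s documentation.

```powershell
# Hypothetical helper: derive a OneDrive URL from a UPN.
# Assumption: non-alphanumeric characters in the UPN become underscores in the URL.
function Get-OneDriveUrl {
    param(
        [Parameter(Mandatory)][string] $Upn,
        [Parameter(Mandatory)][string] $TenantName
    )
    $personal = $Upn.ToLower() -replace '[^a-z0-9]', '_'
    "https://${TenantName}-my.sharepoint.com/personal/$personal"
}

# Invented master list: old UPN in the old tenant, cloud-only UPN in the new one.
$masterList = @(
    [pscustomobject]@{ OldUpn = 'anna.berg@domain.se'; NewUpn = 'anna.berg@newtenant.onmicrosoft.com' }
    [pscustomobject]@{ OldUpn = 'ola.lind@domain.se';  NewUpn = 'ola.lind@newtenant.onmicrosoft.com' }
)

# One mapping row per user.
$mapping = $masterList | ForEach-Object {
    [pscustomobject]@{
        SourceSite      = Get-OneDriveUrl -Upn $_.OldUpn -TenantName 'oldtenant'
        DestinationSite = Get-OneDriveUrl -Upn $_.NewUpn -TenantName 'newtenant'
    }
}

# Write the file the copy tool will read (path is just an example):
# $mapping | Export-Csv -Path 'C:\temp\onedrive_mapping.csv' -NoTypeInformation
```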

Exchange : Oh joy! I was in charge of the Nordics business moving from on-prem to Online 3 years ago so I wasn’t looking forward to another move at all. After a quick check around for what tool to use (with our extremely limited budget – our company had gone bankrupt and we were still getting back on our feet!), it ended up being CodeTwo, which was by far the cheapest alternative. But as the saying goes “you get what you pay for”, and in this instance we paid for software to move data from Mailbox A in Tenant X to Mailbox A in Tenant Y. And it did that job without much of an issue. There were still a lot of things to sort out around the move (like transport rules, conference rooms) but the big issue was just moving all the data. The biggest issue I had with that software was that they didn’t have a CSV import function when moving tenant -> tenant! When moving on-prem -> tenant that wasn’t an issue, but tenant -> tenant, well the only way to enter a mailbox was to actually manually enter a mailbox! So we spent days entering 5,500 mailboxes and matching them with their new mailbox. A simple CSV import would’ve saved us days of work on this. My next issue with the software was that when we were up to about 800 mailboxes per server on 7 servers, the UI really slowed down. At the end it was so slow that when you started a queue for an incremental copy the UI would stop responding and you didn’t even know it was working until it was done and it just popped alive again.

Teams: Now Teams was the most interesting bit. Because Teams is based on so many technologies it was difficult to do a proper Teams migration. No matter how far we looked we just couldn’t find a tool that would migrate Teams with the channels/chats and also take the entire underlying SharePoint site! If you had other document libraries or data on the SharePoint site, then that was lost if you migrated the Team. But if you migrated the SharePoint site you lost the data in Teams that wasn’t in the default document library! So we made the choice of migrating the SharePoint sites since no one should have posted anything business critical in a chat in a channel in Teams. Fortunately ShareGate comes with the ability to recreate O365 groups so all the groups got recreated and we only had to make the ones that were Teams into Teams manually, that was it. But there was a bit of “unexpected behaviour” from ShareGate when it came to legacy sites (that were migrated from on-prem) that now had an O365 group: it simply wouldn’t recognise them as O365 groups or O365 Group sites and created them as legacy sites in the new tenant regardless. But that was easy enough to handle afterwards.

Licenses: This was another headache but fortunately not mine! Since our old license agreement with Microsoft was tied to our old company we couldn’t use that. And since our company was brand new we had no credit score anywhere so Microsoft couldn’t just hand us 3,000 licenses and hope we’d pay. After a lot of back and forth we managed to get the licenses in place well enough to start the migration and begin copying all that data. But there was still the matter of a support contract with Microsoft. There were a lot of options floating around for different support alternatives but in the end we agreed on a premier support deal with Microsoft. Even though the paperwork got sorted and we were told on Friday January 31st that everything was done and we now had premier support with MSFT, it turns out that, like a lot of things in O365, sometimes it can take a day or two for the wheels to turn, and you’ll see how critical this became for us.

Additional headache: One headache we had was that we’re not only running a normal business, we’re also running an airline. And the pilots must be able to check their e-mail for any notices and warnings from the aviation authorities before takeoff. This may include stuff like “this aircraft model isn’t flight worthy so don’t fly this aircraft model” and “Iran just shot down a civilian aircraft, avoid their airspace”. Things like that are absolutely critical for the pilots to check for, so saying “e-mail will be down for a day” is completely unacceptable from that perspective. And we were supposed to retain all the e-mail domains, and a domain can only exist in one tenant at a time. So we had to figure out a way to handle this and move their accounts and e-mail domain as quickly as possible to avoid any flight delays because their e-mail isn’t working. (spoiler – their email was down for 90 minutes)

The plan: So the best plan we came up with was to start an incremental copy of all the SharePoint/OneDrive data first thing on the morning of Saturday February 1st. Then at about 18:00 CET we’d set automatic forwarding on everyone’s mailbox in the old tenant that would forward every mail to their new mailbox with the “onmicrosoft” address. That way we were guaranteed no mail would go missing in case of bad timings. Then we did an incremental copy of all mailboxes. We had done this in plenty of tests and it would only take 2 hours so we planned to start with the first most important domain for our airline at 21:00 CET, then when that was done continue with the largest domain we had (with about 800 users) and work our way through our list of about 10 domains.
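The forwarding step could be sketched roughly like this. It is not the script we ran, just an illustration: the function name `New-ForwardingPlan`, the tenant name and the addresses are made up, and it assumes the new “onmicrosoft” address reuses the local part of the old address (which breaks if two domains share a local part). `Set-Mailbox` with `-ForwardingSmtpAddress` and `-DeliverToMailboxAndForward` is the Exchange Online cmdlet I would expect to use for the actual change, and it is left commented out here.

```powershell
# Hypothetical helper: build one forwarding instruction per mailbox.
function New-ForwardingPlan {
    param(
        [Parameter(Mandatory)][string[]] $PrimaryAddress,
        [Parameter(Mandatory)][string]   $NewTenantName
    )
    foreach ($address in $PrimaryAddress) {
        # Assumption: the new cloud-only address keeps the same local part.
        $localPart = $address.Split('@')[0]
        [pscustomobject]@{
            Identity                   = $address
            ForwardingSmtpAddress      = "${localPart}@${NewTenantName}.onmicrosoft.com"
            # Assumption: keep a copy in the old mailbox as well as forwarding.
            DeliverToMailboxAndForward = $true
        }
    }
}

# Invented sample addresses.
$plan = @(New-ForwardingPlan -PrimaryAddress 'pilot1@domain.se', 'ops@domain.se' -NewTenantName 'newtenant')

# Applying the plan against the OLD tenant would look something like this
# (requires an Exchange Online session, so it is commented out here):
# foreach ($row in $plan) {
#     Set-Mailbox -Identity $row.Identity `
#                 -ForwardingSmtpAddress $row.ForwardingSmtpAddress `
#                 -DeliverToMailboxAndForward $row.DeliverToMailboxAndForward
# }
```

Building the plan as objects first makes it easy to export it, eyeball it, and keep it as the rollback list.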

The switch consisted of a lot of steps since we weren’t allowed to sync an on-prem object to two tenants.

But… “no plan of operations extends with any certainty beyond the first contact with the main hostile force“.

How it played out: I woke up early on Saturday (at about 5) to start the incremental copy of all the SharePoint / OneDrive data. Unfortunately ShareGate was a bit unpredictable in its behaviour so I had to move sites around in the queues to make it before 18:00, but make it I did. Then I ran the PowerShell to set the automatic forwarding and started the incremental copy of the mail. The team (4 engineers, 1 external SME/contractor and the project manager) met up at the office at about 20:00 in the evening for pizza and a last “go/no-go” check for everything. At 21:00 I started with our airline domain, and by 22:30 it was all done: every user had the proper UPN, license and login, and everything was good to go.

And that’s when it started – the operations team in our airline said they couldn’t access their emails in the Outlook app on their phones or computers. We had of course verified that mail worked through the O365 portal, so we knew everything worked on the service side. After troubleshooting this for about an hour we decided to log a Severity A case with Microsoft (at 23:30); one of us would work on this case and the rest would continue working with the other domains. That work came to a halt for one of our largest domains, which wasn’t removed from the old tenant. No user had it in their UPN, no recipient used the domain, nothing. But the domain never got deleted, it was stuck in “pending”. So another Severity A case to Microsoft (at about 00:30) and we proceeded with the next domain. At about 02 in the morning that domain did eventually go away by itself and we thought everything was good, when our airline operations team (whose responsibility it is to keep the planes flying, so I have the utmost respect for them and their challenges!) wanted us to do a rollback and try again at a later date. We spent about an hour arguing with them that a rollback wouldn’t solve this issue and that we didn’t have time to try again next week since we had to evacuate the old tenant. Another argument was that this was a client issue, the mails were accessible through the web, and we could get Microsoft to solve the client issue after.

Fortunately we were able to convince them to proceed, but now we were at 03 in the morning and I had been working for 22 hours straight and I had no energy left in me, so I tried sleeping for a bit. After 2 hours I woke up to cheers because now the Outlook clients in our airline had started to work, so the biggest issue we had was solved and we could keep on with the remaining few domains.

At about lunch on Sunday morning we were all done with all domains and users and started to do the clean up job of on-prem systems no one knew about that had a EWS configured to the old tenant that no longer worked etc and that continued for days.

So where was Microsoft in this? As I mentioned, our premier support deal with them got activated on the day before the switch. But that hadn’t replicated to all systems and instances of those systems in Microsoft, so it was a big challenge even to get them to accept a SevA case from us. But we had two cases that managed to register as SevA cases with them during this switch, and they weren’t helping us with either of them. The first case was regarding Outlook clients no longer being able to connect. Many blogs on many sites on the Internet say “when moving to a new tenant this may take a few hours”. In our case we were already up to hours, and when creating new users we were able to connect to them immediately, but not to the ones that had been switched, and we didn’t see a reason why. This started to resolve itself after about 6 hours. And it wasn’t thanks to Microsoft doing anything on their side, because they called me at about 5:30 on the Sunday morning to say “sorry but we still haven’t been able to find any engineer to work on this case”. The other case we had with them was regarding the domain that wasn’t getting deleted. They called back on that issue, also after it was resolved, to ask us to verify the domain name, because according to what they were seeing the domain was no longer in the old tenant, so they obviously hadn’t done anything on their end in that case either.

Lessons learned:

I recently came up against an issue that I eventually needed MSFT to investigate and come up with a solution for and in the hopes of saving someone else the trouble, I’m going to go ahead and write a small blog post about it.

Symptom: The issue was that users in Skype For Business Online were stuck in the “Disabled” state and with the Directory Status “On-premises (hybrid)”. Nothing I did changed that. But other users belonging to the same domain (“contoso.com”) were enabled without issues?

After analyzing a lot of the users I found that the attribute “HostingProvider” was set to “SRV:” for the users that it didn’t work for, but “sipfed.online.lync.com” for the ones that it did work for.

Root cause: The root cause for this is that when a user is provisioned in Skype For Business Online, “O365” checks in real time for a DNS record “lyncdiscover” of the domain of the user, in this case “lyncdiscover.contoso.com”. If the DNS record is set to “webdir.online.lync.com” then the user will be provisioned as an “Online” user and enabled. But if the DNS record is something else, then it will assume the user exists in an on-premise environment and it will be provisioned as an “On-premises (hybrid)” user and disabled. And in our case, sometime during the adoption of O365 services this DNS entry lost the trailing period (“.”), so for O365 it looked like “webdir.online.lync.com.contoso.com” and that’s why it assumed they were on-prem!

The Fix: Fixing the DNS entry is easy enough so that should solve it, right? Unfortunately this check happens when a user is provisioned and then it’s set. And the only way to trigger an update is to delicense the user (that is “removing Skype For Business license”), wait a few hours and then license the user again! That will trigger a provisioning process again and O365 will see the correct DNS setting and the user will be “Enabled”!

Thank you MSFT for the details regarding this process!
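If you want to check your own domains for the same problem, a minimal sketch could look like this. The function name `Test-LyncDiscoverTarget` is made up; the expected target “webdir.online.lync.com” is the one described above. The actual lookup uses `Resolve-DnsName` (Windows only) and is left commented out, so the example runs with plain strings.

```powershell
# Hypothetical helper: does a lyncdiscover CNAME target point at Skype for Business Online?
function Test-LyncDiscoverTarget {
    param([string] $Target)
    # Ignore a trailing dot; -eq on strings is case-insensitive in PowerShell.
    ($Target.TrimEnd('.') -eq 'webdir.online.lync.com')
}

# A correct record, and the broken one from this post where the zone name got appended.
$good = Test-LyncDiscoverTarget -Target 'webdir.online.lync.com'
$bad  = Test-LyncDiscoverTarget -Target 'webdir.online.lync.com.contoso.com'

# Against real DNS it would be something like this (domain name is an example):
# $record = Resolve-DnsName -Name 'lyncdiscover.contoso.com' -Type CNAME |
#     Where-Object { $_.Type -eq 'CNAME' } | Select-Object -First 1
# Test-LyncDiscoverTarget -Target $record.NameHost
```

Looping that over every verified domain in the tenant before licensing users would have caught our missing trailing period up front.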

SCENARIO

You’re managing a large O365 tenant with an AD FS service or multiple AD FS services, and those certificates are expiring and need replacing.

PROBLEM

The main problem is that there is no good way of telling ADFS to do something on only the domains that it actually is federated with, it’ll just assume it has them all. This may lead to some complications.

SOLUTION

I wrote this little script because I wanted to know

a) the domains that were federated to this ADFS service

b) the domains that were NOT federated to this ADFS service

c) the domains that hadn’t refreshed the signing certificate.

This little script, which must be executed on the ADFS service in an admin powershell, will first check the URL of the local ADFS service and then go through every domain in your tenant to see which match, and if they match will check the certificate. That way you know exactly which domains to look at.

It spits it all out in the console but also in a set of text files in the c:\temp directory (one per outcome). And if you feel brave enough, you can uncomment the “update-federation” command to run that command.

Also it assumes you are already connected to the MSOL Service.

Start-Transcript c:\temp\msolfederation_check_log.txt

# Getting the local AD FS server address:

$stsaddress = ""

$stsaddress = (Get-AdfsEndpoint -AddressPath /adfs/ls/).FullUrl

$stsaddress = $stsaddress -replace "https://","" -replace "/adfs/ls/",""

write-host "The local AD FS address is $stsaddress"

$federateddomains = Get-MsolDomain | where{$_.authentication -eq "Federated"}

foreach($feddomain in $federateddomains)

{

# Clearing the variables

$certmatch = ""

$feddomainname=""

$fedinfo=""

$fedinfosts=""

# Setting the domainname of this domain

$feddomainname=$feddomain.name

if($feddomain.rootdomain)

{

write-host -ForegroundColor Yellow "$feddomainname is a subdomain, skipping check"

$feddomainname >> "C:\temp\FedDomains - Subdomains.txt"

}

else

{

write-host -NoNewline "Checking Federation for domain $feddomainname..."

# Getting federation information for this domain

$fedinfo = Get-MsolFederationProperty -domainname $feddomainname -ErrorAction SilentlyContinue

if($fedinfo)

{

# Getting the STS info for this domain, which can be in either of the two entries of the resulting array

if($fedinfo.source[0] -eq "Microsoft Office 365") { $fedinfosts = $fedinfo.tokensigningcertificate[0].subject }

if($fedinfo.source[1] -eq "Microsoft Office 365") { $fedinfosts = $fedinfo.tokensigningcertificate[1].subject }

# Now we check if the thumbprints match

if($fedinfo.tokensigningcertificate[0].Thumbprint -eq $fedinfo.tokensigningcertificate[1].Thumbprint) { $certmatch = "1" } else { $certmatch = "" }

if($fedinfosts -like "*$stsaddress*")

{

write-host -NoNewLine " Federated to "

write-host -NonewLine -foregroundcolor Green "this AD FS service"

if($certmatch -eq "")

{

write-host -ForegroundColor Red " but certificates do not match!!!"

# You could try to execute the below command to update the Federation information

# if you feel safe in this.

# Update-MsolFederatedDomain -DomainName $feddomainname -SupportMultipleDomain

$feddomainname >> "C:\temp\FedDomains - ADFS Match - Cert Mismatch.txt"

}

else

{

write-host -ForegroundColor Green " and certificates do match."

$feddomainname >> "C:\temp\FedDomains - ADFS Match - Cert Match.txt"

}

$feddomainname >> "C:\temp\FedDomains - domains federated to this ADFS.txt"

}

else

{

write-host -NoNewLine " Federated to "

write-host -foregroundcolor Yellow "another AD FS instance"

$feddomainname >> "C:\temp\FedDomains - ADFS Mismatch.txt"

}

}

}

}

Stop-Transcript
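A small side note on the address clean-up at the top of the script: instead of chaining two `-replace` calls, you could let .NET parse the URL and take the host name. This is only a sketch with an invented URL and function name, and it assumes the endpoint value is (or converts cleanly to) an absolute URL.

```powershell
# Hypothetical helper: pull just the host name out of an AD FS endpoint URL.
function Get-StsHostName {
    param([Parameter(Mandatory)][string] $EndpointUrl)
    # [uri] parses the string; Host drops the scheme, port and path.
    ([uri]$EndpointUrl).Host
}

# Invented example URL.
$stsHost = Get-StsHostName -EndpointUrl 'https://sts.contoso.com/adfs/ls/'
$stsHost
```

The benefit is mostly robustness: it keeps working if the URL has a port number or a slightly different path.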

SCENARIO

When executing SharePoint Online scripts you need to be connected to your “admin” site or the script will simply fail.

PROBLEM

When writing a script you can’t assume that you’re already connected to your SPO tenant and unlike the “msolservice” connect call you need to specify your “admin” URL which can be quite long. But sometimes you’re already connected in the Powershell session.

SOLUTION

Writing this little thing in the start of your script will check if you’re connected to the admin site and if not will call the connect-sposervice command with the URL already set.

# First we reset the sitecheck to avoid having an old result

$sitecheck=""

# This is the address of your SPO admin site

$adminurl = "https://[your tenant name]-admin.sharepoint.com"

# Now we try to get the SPOSITE info for the admin site

Try { $sitecheck = get-sposite $adminurl }

# If we get this server exception for any reason, the service isn't available and we need to take action, in this case

# write it to the console and then connect to the SPO service.

Catch [Microsoft.SharePoint.Client.ServerException]

{

Write-Host -foreground Yellow "You are not connected!"

connect-sposervice $adminurl

}
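The same “probe first, connect only if needed” idea can be wrapped in a small reusable function. This is a sketch, not something from the SPO module: `Confirm-Connection` is a made-up name, and the two script blocks below are stand-ins so the example runs without any module loaded. With the real cmdlets, remember that the probe has to throw for the catch to fire, so add `-ErrorAction Stop` to it.

```powershell
# Hypothetical helper: run a probe, and only call the connect logic if the probe throws.
function Confirm-Connection {
    param(
        [Parameter(Mandatory)][scriptblock] $Probe,
        [Parameter(Mandatory)][scriptblock] $Connect
    )
    try {
        & $Probe | Out-Null
        return 'AlreadyConnected'
    }
    catch {
        & $Connect | Out-Null
        return 'Connected'
    }
}

# Stand-ins for the real cmdlets: a flag plays the role of the session state.
$script:connected = $false
$probe   = { if (-not $script:connected) { throw 'not connected' } }
$connect = { $script:connected = $true }

$first  = Confirm-Connection -Probe $probe -Connect $connect   # has to connect
$second = Confirm-Connection -Probe $probe -Connect $connect   # already connected

# With the SPO module it would look something like this:
# Confirm-Connection -Probe   { Get-SPOSite $adminurl -ErrorAction Stop } `
#                    -Connect { Connect-SPOService -Url $adminurl }
```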

Recently ran into an issue where a user in the on-prem AD had been deleted unintentionally and in the next sync his cloud user was deleted along with his mailbox.

Googling around I found a helpful article on how to best go about restoring this. It’s basically about creating a new on-prem user and setting the new GUID on the recovered AzureAD user so AzureAD Connect can tie them together.

However, when trying to set the new “ImmutableID” with “set-msoluser” I got this error:

Set-MsolUser : You must provide a required property: Parameter name: FederatedUser.SourceAnchor

Took a lot of Googling to realise what was wrong! The issue here is that you can’t set a new ImmutableID on a user in a Federated domain! So the trick here was to change the user to an “onmicrosoft” user, change the ImmutableID and then change it back to the federated domain!

# Checking the original ImmutableID

get-msoluser -UserPrincipalName [email protected] | select *immutableid*

# Changing it to a "onmicrosoft" UPN

set-MsolUserPrincipalName -UserPrincipalName [email protected] -NewUserPrincipalName [email protected]

# Setting a new Immutable ID from on-prem AD

set-MsolUser -UserPrincipalName [email protected] -ImmutableId "Z/-XGv2W4kWPM1mR/ddSdn!)"

# Check that the change was applied

get-msoluser -UserPrincipalName [email protected] | select *immutableid*

# Changing it back to the original UPN

set-MsolUserPrincipalName -UserPrincipalName [email protected] -NewUserPrincipalName [email protected]

# Checking that the UPN is now correct and the correct ImmutableID is applied

get-msoluser -UserPrincipalName [email protected] | select *immutableid*

Hope that saves someone some headache.
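In case it helps, this is roughly how the ImmutableID value relates to the on-prem GUID, assuming your Azure AD Connect uses objectGUID as the source anchor (newer installs may use ms-DS-ConsistencyGuid instead, so check yours). The function names are made up; the conversion itself is just Base64 of the GUID’s byte array.

```powershell
# Hypothetical helpers: convert between an on-prem GUID and the Base64 ImmutableID form.
function ConvertTo-ImmutableId {
    param([Parameter(Mandatory)][guid] $ObjectGuid)
    # ImmutableID is the Base64 encoding of the 16 GUID bytes.
    [System.Convert]::ToBase64String($ObjectGuid.ToByteArray())
}

function ConvertFrom-ImmutableId {
    param([Parameter(Mandatory)][string] $ImmutableId)
    # Reverse direction: decode the Base64 string back into a GUID.
    [guid]::new([System.Convert]::FromBase64String($ImmutableId))
}

# Round trip with a freshly generated GUID.
$guid        = [guid]::NewGuid()
$immutableId = ConvertTo-ImmutableId -ObjectGuid $guid
$roundTrip   = ConvertFrom-ImmutableId -ImmutableId $immutableId

# With the AD module, the input would come from the new on-prem user, e.g.:
# $guid = (Get-ADUser -Identity 'someuser').ObjectGUID
```

The reverse function is handy for checking which on-prem object an existing cloud user is anchored to.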

SCENARIO

You want to know how many users are using SMS for MFA and how many are using the mobile app, so you can change user behavior and drive adoption of the MFA app.

PROBLEM

By default when users enrol with MFA they click “Next” all the way and end up with SMS authentication, regardless of what information we provide them with. And the way Microsoft stores this information isn’t very friendly for us to see this easily.

SOLUTION

I wrote this to demonstrate to management that users indeed don’t read the e-mails sent out to them, which detailed that they should use “Mobile app” verification. What actually happened was they just clicked “Next” all the way and ended up with SMS authentication. In our case we ended up with about 2% of users choosing the application!

# Setting the counters

$phoneappnotificationcount = 0

$PhoneAppOTPcount = 0

$OneWaySMScount = 0

$TwoWayVoiceMobilecount = 0

$nomfamethod = 0

# Getting all users

$allusers = Get-MsolUser -all

# Going through every user

foreach($induser in $allusers)

{

# Resetting the variables

$methodtype = ""

$strongauthmethods = ""

$upn = ""

$strongauthmethods = $induser | select -ExpandProperty strongauthenticationmethods

$upn = $induser.userprincipalname

# This check is if the user has even enrolled with MFA yet, otherwise we +1 to that counter.

if(!$strongauthmethods) { $nomfamethod++ }

# Going through all methods ...

foreach($method in $strongauthmethods)

{

# ... to find which is the default method.

if($method.IsDefault)

{

$methodtype = $method.MethodType

if($methodtype -eq "PhoneAppNotification") { $phoneappnotificationcount++ }

elseif($methodtype -eq "PhoneAppOTP") { $PhoneAppOTPcount++ }

elseif($methodtype -eq "OneWaySMS") { $OneWaySMScount++ }

elseif($methodtype -eq "TwoWayVoiceMobile") { $TwoWayVoiceMobilecount++ }

# If you want to get a complete list of what MFA method every user got, remove the hashtag below

# write-host "User $upn uses $methodtype as MFA method"

}

}

}

# Now printing out the result

write-host "Amount of users using MFA App Notification: $phoneappnotificationcount"

write-host "Amount of users using MFA App OTP Generator: $PhoneAppOTPcount"

write-host "Amount of users using SMS codes: $OneWaySMScount"

write-host "Amount of users using Phone call: $TwoWayVoiceMobilecount"

write-host "Amount of users with no MFA method: $nomfamethod"
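If you would rather not maintain one counter per method, the same tally can be sketched with `Group-Object`. The helper name `Get-DefaultMfaMethod` and the sample users are invented; the property names (`StrongAuthenticationMethods`, `IsDefault`, `MethodType`) are the ones the script above reads. One difference to be aware of: a user who has methods registered but no default is counted as “None” here, whereas the script above does not count that user at all.

```powershell
# Hypothetical helper: return the default MFA method type for one user, or 'None'.
function Get-DefaultMfaMethod {
    param([Parameter(Mandatory)] $User)
    $default = @($User.StrongAuthenticationMethods) |
        Where-Object { $_.IsDefault } |
        Select-Object -First 1
    if ($default) { $default.MethodType } else { 'None' }
}

# Invented sample users shaped like Get-MsolUser output.
$sampleUsers = @(
    [pscustomobject]@{ UserPrincipalName = 'a@contoso.com'; StrongAuthenticationMethods = @(
        [pscustomobject]@{ MethodType = 'OneWaySMS'; IsDefault = $true },
        [pscustomobject]@{ MethodType = 'PhoneAppNotification'; IsDefault = $false }) }
    [pscustomobject]@{ UserPrincipalName = 'b@contoso.com'; StrongAuthenticationMethods = @(
        [pscustomobject]@{ MethodType = 'PhoneAppNotification'; IsDefault = $true }) }
    [pscustomobject]@{ UserPrincipalName = 'c@contoso.com'; StrongAuthenticationMethods = @() }
    [pscustomobject]@{ UserPrincipalName = 'd@contoso.com'; StrongAuthenticationMethods = @(
        [pscustomobject]@{ MethodType = 'OneWaySMS'; IsDefault = $true }) }
)

# One row per method type with the number of users who have it as default.
$summary = $sampleUsers |
    ForEach-Object { Get-DefaultMfaMethod -User $_ } |
    Group-Object |
    Sort-Object Count -Descending |
    Select-Object Name, Count

$summary
```

A nice side effect is that any method type Microsoft adds later shows up in the summary on its own, without another `elseif`.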

This is going to be a wall of text. And 99% of the people I know aren’t even interested. But I’m writing this on behalf of every other SharePoint admin out there who are unfortunate enough to discover just how easy SharePoint is to break!

A little background: I’ve been working with SharePoint since about 2005. Not that long for some, but long enough to know that after a few years of use a SharePoint farm has a few quirks in it and it’s a good idea to upgrade it. And you never upgrade an existing farm, you always start with a fresh new one and import all data! Now one of my jobs (!) is managing a 30k user corporate SharePoint – a business critical solution since all documentation is in there. And not only that, our entire BI solution is in there as well, complete with “PowerPivot” and “Reporting Services for SharePoint”… No pressure!

So now it was time to upgrade it from SP2013/SQL2014 to SP2016/SQL2016, including all BI solutions. We’d gone through a “dev” environment, a “test” environment and even a “preprod” environment and everything went surprisingly well. There were of course the usual glitches getting the BI features to work (and the S2S cert trust that is required for Excel with data source connection files now that Excel service moved out of SP to OOS!). But anyway, the preprod farm was so great that the plan was to take it into production. Our BI team didn’t see a big problem doing that in an afternoon on a weekday, whereas for me the biggest problem was the 1.5TB of data that needed to be shuffled and upgraded. And “even the best laid plans”, you know. I also knew that one of the biggest issues was the network infrastructure, which for a global company is so complex that the best way forward was to swap IP addresses of the servers so we wouldn’t have to change DNS or static IP routes anywhere; we’d just solve it at the load balancer level. So I managed to get a whole Saturday from the business to have SharePoint offline, but no more. After all, all documentation is in there!

That Saturday was last Saturday April 14th. I got up at 4am to start shuffling the data. By 7 that was done and I started upgrading the database with the normal “mount-spcontentdatabase”. Here was my first mistake (in hindsight). I had already written a script to do this, but that’ll come later. By 10 everything was loaded, upgraded and I proceeded to change IP addresses around and change it in the load balancer, then go through my long list of checks that normal user SP functionality works while our BI team were updating all of their things.

After lunch we had a “go/no-go” meeting and everything looked good. I also noticed at this point I had a case to create a new SharePoint site for a project, something I actually hadn’t tested since that’s not a “normal user SP functionality”. And that’s when the shit hit the fan! What I had missed thanks to my scripting was that one of the content databases had failed to upgrade and was now corrupt. When I wanted to create a new site, SharePoint created it in that database since it was the “least used”, hence the error. “No problem, plan a) I’ll just delete this database”, right? Nope, SharePoint wouldn’t have it because the database wasn’t attached since it was corrupt. Yet I could see the sites in that database listed with get-spsite?

Tried a few things but couldn’t recover, so I decided on plan b): remove the web app, create a new one and re-import/re-mount this corrupt DB. All other DBs were already upgraded successfully so not a big operation. Well, SharePoint wouldn’t have that either – it couldn’t dismount this database because it was corrupt so I couldn’t remove the webapp! I was completely stuck with a broken web app that I couldn’t remove because of a content database that wasn’t mounted?!

So plan c): rename that webapp with the corrupt database and give it a nonsense URL so I could create a new web app with the proper URL. That seemed to work, but when I tried importing a new backup of this content DB it didn’t import any of the site collections! Digging around I could see that the sites in the broken webapp, with the new nonsense URL, still had the original URL! It couldn’t update them because… there was no content DB attached to them! I dug around in SharePoint Manager (which was designed for 2013, I know) but it kept crashing when I clicked any of the sites in the broken webapp.

So there I was with a broken web app with a corrupt contentdb with sites occupying the URL I needed to create our proper web app. I came to the conclusion that the config db was pretty much fucked at this point, and now we’re at 2pm. The best option available to me at this point was opening a severity A case with Microsoft Premier Support. I’m pretty sure if I had gone for that they would have looked at it, made the same determination as me and said “since this is a farm not yet in production, I’d say the best way forward is to recreate the farm”. During that time our BI would be in SharePoint 2016 but the “big” web app in 2013 on separate IP addresses! God knows how the network would handle that, and getting the engineers in India to change firewall routes in less than a week wasn’t that likely. Because rebuilding a new farm in production would take at least a week, right?…

I cleared it with my supervisor that this was indeed the best way to solve it NOW! All other options led to some unknown hellhole, and going back was always a possibility no matter what.

I got a green light and Red Bull at about 3pm …

Basically I had done at least a week’s work in 7 hours and all in a production environment!

The “Done!” mail got out at 9pm! Now I’m not one to brag, but any SharePoint admin must be impressed by that! Hell, even Scotty would be proud! I spent a few hours on Sunday cleaning up the mess and sorting out the BI issues (since this was a new farm there was a lot of BI configuration that was lost) but by Sunday 6pm everything was fully operational and I promptly went to bed and slept like a baby. And one of the first things to hit me on Monday morning was “why is Managed Metadata empty” because yeah, in my haste I forgot that little thing.

How was your weekend?

Yeah, I don’t know why but NASA has a special place in my heart. Maybe cause I’m a tech nerd, a scifi geek or just like to have my shit together, or maybe it’s some visionary part of me I don’t know I have, but I just love it. In everything from fictional NASA in “Contact” to proper NASA in “From the Earth to the Moon” it’s an inspiration. That’s one of the reasons I made a point of going to Kennedy Space Centre when I was in Florida and one of the reasons that trip was an awesome success to me. And one of the reasons I didn’t have a problem opening up my wallet in the gift shop!

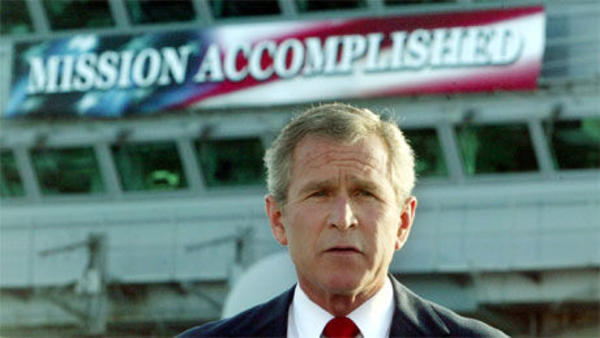

And yesterday I saw the movie/documentary “Mission Control”, about those 20-something engineers that made up the mission control team. I really recommend catching it on Netflix or renting it on bluray cause it’s awesome. One of the things that surprised me was the interview with one of the engineers who was there in the trench for a lot of the Apollo missions, even the moon landing, who said he regretted doing it because of the toll it took on his family! I mean, it’s one of the things I can only dream of doing, so hearing that makes you wonder what really is important: making a mark in history or being with your family.

Another piece of NASA “merchandise” I can recommend is the book “View From Above” by astronaut and “photographer in space” Terry Virts. You can get it from Amazon or something but it’s well worth it. Not only because of the awesome pictures that make you feel tiny and insignificant but also because of the stories he has to tell.

SCENARIO

You’re managing a SharePoint Online environment and you want to know where external sharing is enabled.

PROBLEM

The problem is that Microsoft hasn’t fully launched a way of getting a good overview of this where you can change it. When you first enable sharing, for example, a lot of sites will have it turned on by default etc. The new SharePoint Admin center has the ability to add the “external sharing on/off” column in the list of sites but that is very limited and you can’t enable or disable it there.

SOLUTION

Fortunately there is a very good attribute called “SharingCapability” that you can retrieve to check, and alter, the external sharing setting.

So using that you can get a list of all sites and what the status is of them, or you can filter for all that have it enabled or disabled:

get-sposite | select url, SharingCapability # All sites with their URL and SharingCapability

get-sposite | Where-Object{$_.SharingCapability -eq "ExternalUserSharingOnly"} # External user sharing (share by email) is enabled, but guest link sharing is disabled.

get-sposite | Where-Object{$_.SharingCapability -eq "ExistingExternalUserSharingOnly"} # External user sharing to existing Azure AD Guest users

get-sposite | Where-Object{$_.SharingCapability -eq "ExternalUserAndGuestSharing"} # External user sharing (share by email) and guest link sharing are both enabled

get-sposite | Where-Object{$_.SharingCapability -eq "Disabled"} # External user sharing disabled

Once you have that you can script it so you can disable external sharing on all sites by doing this:

$allsites = get-sposite | Where-Object{$_.SharingCapability -ne "Disabled"}

foreach($specificsite in $allsites) { Set-SPOSite $specificsite.url -SharingCapability Disabled }

The reason you want to do this in a “foreach” is that there will be a site or two where you get an error saying you can’t change the setting. Without the loop, that error would exit the command.
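If you also want a list of the sites that refused the change, one way is to wrap each call in a try/catch and collect the failures. This is only a sketch: `Invoke-PerSite` is a hypothetical helper name, the contoso URLs are placeholders, and the demo action just simulates a site that can’t be changed so the example runs without a tenant. Against SharePoint Online the action would be the `Set-SPOSite` call shown in the comment.

```powershell
# Runs an action per URL and collects failures instead of stopping at the first one.
function Invoke-PerSite {
    param(
        [string[]]$Url,
        [scriptblock]$Action
    )

    $failed = @()
    foreach ($u in $Url) {
        try {
            # Discard any output from the action so only failed URLs are returned
            $null = & $Action $u
        }
        catch {
            Write-Warning "Could not change $u : $($_.Exception.Message)"
            $failed += $u
        }
    }
    $failed
}

# Stand-in action for the demo (assumption). Against a real tenant this would be:
#   { param($u) Set-SPOSite $u -SharingCapability Disabled -ErrorAction Stop }
$demoAction = { param($u) if ($u -like '*locked*') { throw 'setting cannot be changed' } }

$failedSites = @(Invoke-PerSite -Url 'https://contoso.sharepoint.com/sites/a', 'https://contoso.sharepoint.com/sites/locked' -Action $demoAction)
$failedSites
```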

SCENARIO

You’re asked how many users have what storage quota/limit in Exchange.

PROBLEM

The problem originates from MS saying that the standard Exchange Online mailbox is 100GB in size. But some of our users are reporting they “only” have 50. I thought this was a minority of people so not a big thing. My manager disagrees.

SOLUTION

I began writing quite a complicated PowerShell script for this, but when I looked at it after a coffee break I said to myself “there’s gotta be a better way”. And sure enough there is. I’d simply never used the “Group-Object” cmdlet before! But now I can get a clear report that’s just 3 lines long!

get-mailbox -resultsize Unlimited | Group-Object -property ProhibitSendReceiveQuota
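To show what Group-Object hands back, here is a small sketch using stand-in objects instead of real mailboxes. The names and quota values are made up for illustration; with Exchange Online the input would be the `get-mailbox` output from the line above.

```powershell
# Stand-in objects (assumption); real input would be Get-Mailbox -ResultSize Unlimited
$mailboxes = @(
    [pscustomobject]@{ Name = 'anna'; ProhibitSendReceiveQuota = '100 GB' }
    [pscustomobject]@{ Name = 'bert'; ProhibitSendReceiveQuota = '50 GB' }
    [pscustomobject]@{ Name = 'carl'; ProhibitSendReceiveQuota = '100 GB' }
)

# One row per distinct quota value, with the number of mailboxes that have it
$quotaReport = $mailboxes |
    Group-Object -Property ProhibitSendReceiveQuota |
    Sort-Object -Property Count -Descending |
    Select-Object Count, Name

$quotaReport
```

In the result, `Name` is the quota value and `Count` is how many mailboxes have it, which is all the manager asked for.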

SCENARIO

You’re trying to install SharePoint 2016 on a Windows 2016 server and things just aren’t going well.

PROBLEM

To be honest I don’t know how to explain the problem other than that Microsoft’s Windows Server 2016 team must have been in a feud over lunchboxes with the SharePoint 2016 devs, because there is no other way to describe the complete incompatibility between the two!

SOLUTION

I’d say “Google it!” but that’s probably what got you here in the first place!

The first problem is the prerequisite installer that can’t configure the Windows IIS role or download things. Fret not for there is plenty of help to find. When first running the prereq you’ll probably get this error: “Web Server (IIS) Role: configuration error”. To configure IIS, use this PowerShell:

Add-WindowsFeature Web-Server,windows-identity-foundation,NET-Framework-45-ASPNET,Web-Mgmt-Console,Web-Mgmt-Compat,Web-Metabase,Web-Lgcy-Mgmt-Console,Web-Lgcy-Scripting,Web-Mgmt-Tools,Web-WMI,Web-Common-HTTP,NET-HTTP-Activation,NET-Non-HTTP-Activ,NET-WCF-HTTP-Activation45 -Source 'Q:\sources\sxs'

Make sure to edit the -Source path so it points to your Windows Server 2016 ISO!

The next place you should look at is this blog by the Microsoft Field Engineer Nik. Although be careful about some of his links as those are outdated and replaced with new versions, although downloading the version he’s linking will still work. He even provides a script that will run the Powershell to configure everything. Why this isn’t on the SharePoint 2016 ISO is beyond me!

But even when downloading all of that and installing it properly I was still faced with this error when trying to setup the farm: “New-SPConfigurationDatabase : One or more types failed to load. Please refer to the upgrade log for more details.“. Going through the install log I found this: “SharePoint Foundation Upgrade SPSiteWssSequence ajywy ERROR Exception: Could not load file or assembly ‘Microsoft.Data.OData, Version=5.6.0.0, Culture=neutral, PublicKeyToken=31bc3856cd365e35’ or one of its dependencies. The system cannot find the file specified.“

It seems that the WCF prerequisite doesn’t install properly when you use the PowerShell method of manually downloading and installing it! Fortunately the quick fix is to find the file “WcfDataServices.exe” in your profile directory (i.e. NOT the one you downloaded!), run it and choose “Repair”. Only then did SharePoint 2016 install properly!

SCENARIO

You’re managing a large O365 tenant and you want to make sure there are no users that have multiple licenses assigned.

PROBLEM

The original problem is that you actually can assign a user an F1, an E1 and an E3 license and end up paying three times for one user! The next problem comes with how license information is stored and retrieved with PowerShell.

SOLUTION

Here is a little code that will read out all your users and go through each one to make sure they don’t have more than one of the licenses assigned. It should work as long as Microsoft doesn’t change the _actual_ names for licenses!

$allusers = Get-MsolUser -All

foreach($msoluser in $allusers)

{

$userpn = $msoluser.userprincipalname

# Reusing the object we already have instead of querying every user a second time

$userlicense = $msoluser | select Licenses

if($userlicense.Licenses.AccountSkuId -like "*ENTERPRISEPACK*" -and $userlicense.Licenses.AccountSkuId -like "*DESKLESSPACK*" -and $userlicense.Licenses.AccountSkuId -like "*STANDARDPACK*")

{

write-host -Foregroundcolor Red "$userpn has both E1 and E3 and F1"

}

elseif($userlicense.Licenses.AccountSkuId -like "*ENTERPRISEPACK*" -and $userlicense.Licenses.AccountSkuId -like "*STANDARDPACK*")

{

write-host -Foregroundcolor Yellow "$userpn has both E3 and E1"

}

elseif($userlicense.Licenses.AccountSkuId -like "*STANDARDPACK*" -and $userlicense.Licenses.AccountSkuId -like "*DESKLESSPACK*")

{

write-host -Foregroundcolor Yellow "$userpn has both E1 and F1"

}

elseif($userlicense.Licenses.AccountSkuId -like "*ENTERPRISEPACK*" -and $userlicense.Licenses.AccountSkuId -like "*DESKLESSPACK*")

{

write-host -Foregroundcolor Yellow "$userpn has both E3 and F1"

}

}

The script can of course be enhanced to write a log or even mail a log to an admin if you want.
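The chain of if/elseif checks could also be collapsed into one lookup that returns whichever of the three plans a user has. This is a sketch under my own assumptions: `Get-WatchedPlan` is a hypothetical helper name and the contoso SKU strings are sample data; per user the input would be `$msoluser.Licenses.AccountSkuId`.

```powershell
# Returns the friendly names of the watched plans found in a list of AccountSkuId strings.
function Get-WatchedPlan {
    param([string[]]$AccountSkuId)

    # Wildcard pattern -> friendly name, same three plans as the script above
    $watched = [ordered]@{
        '*ENTERPRISEPACK*' = 'E3'
        '*STANDARDPACK*'   = 'E1'
        '*DESKLESSPACK*'   = 'F1'
    }

    foreach ($pattern in $watched.Keys) {
        # -like against an array returns the matching elements; empty means no match
        if ($AccountSkuId -like $pattern) { $watched[$pattern] }
    }
}

# Sample SKU list (assumption)
$plans = @(Get-WatchedPlan -AccountSkuId 'contoso:ENTERPRISEPACK', 'contoso:DESKLESSPACK', 'contoso:EMS')

if ($plans.Count -gt 1) {
    Write-Host -ForegroundColor Yellow "User has more than one plan: $($plans -join ', ')"
}
```

Adding a fourth plan to watch is then one more line in the table instead of several new elseif branches.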

SCENARIO

You’re handed a list of e-mail addresses for mass mailing from HR and they need to verify that all e-mail addresses are valid and won’t bounce “like last time”.

PROBLEM

There are a few problems with this. One is the fact that not all e-mail addresses are the primary e-mail address and won’t show up in a normal search.

SOLUTION

I put this little script together that will first connect to your MS Online tenant, then read all MSOL users into an array, import the CSV file containing the employees, go through each row and check that the e-mail address from the file in the column “employeeemailaddress” exists as a proxy address on at least one user. If not it writes out the e-mail to a log in c:\temp. Nothing too advanced, just a few things put together to achieve a very, VERY tedious task when you get a list of 10.000 e-mail addresses!

This can also be modified to check if any other attribute exists or not on users if you want to; it was just for this scenario that I had to check e-mail addresses! It can also be modified to read out the local AD and not the Azure AD, of course.

Please comment out the first two lines if you run this more than once in a Powershell window since the list of users is already in the variable and reading out all MSOLUsers can take a very long time!

connect-msolservice

$allusers = Get-MsolUser -All

# Prepping the log

$DateStamp = Get-Date -Format "yyyy-MM-dd-HH-mm"

$LogFile = ("C:\temp\invalid_emailaddresses-" + $DateStamp + ".log")

# Defining the log function

Function LogWrite

{

Param ([string]$logstring)

Add-content $Logfile -value $logstring

}

$csv = import-csv C:\temp\emailaddresses.csv

foreach($csvobject in $csv)

{

$emailuser = ""

$emailaddress = $csvobject.employeeemailaddress

Write-Host -ForegroundColor Yellow "Looking up user with e-mail $emailaddress"

$emailuser = $allusers | where {$_.proxyaddresses -like "*$emailaddress*"} | select DisplayName

if(!$emailuser.displayname)

{

LogWrite ("Could not find user with e-mail address $emailaddress")

write-host -ForegroundColor Red "Could not find user with e-mail address $emailaddress"

}

else

{

write-host -ForegroundColor Green "User found, e-mail address is good"

}

}
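With 10.000 addresses, scanning every user for every row gets slow. One possible speed-up, sketched here with stand-in users, is to build a hashtable of all proxy addresses once and look each e-mail address up in it. Note this is a behaviour change I’m assuming is acceptable: it matches the whole address exactly rather than with wildcards. The variable names and contoso addresses are illustrative only.

```powershell
# Stand-in users (assumption); real input would be $allusers from Get-MsolUser -All
$sampleUsers = @(
    [pscustomobject]@{ DisplayName = 'Anna Berg'; ProxyAddresses = @('SMTP:anna.berg@contoso.com', 'smtp:anna@contoso.com') }
    [pscustomobject]@{ DisplayName = 'Carl Dahl'; ProxyAddresses = @('SMTP:carl.dahl@contoso.com') }
)

# Build the index once: address (without the "smtp:" prefix) -> display name
$addressIndex = @{}
foreach ($user in $sampleUsers) {
    foreach ($proxy in $user.ProxyAddresses) {
        # -replace is case-insensitive, so this strips both "SMTP:" and "smtp:"
        $address = $proxy -replace '^smtp:', ''
        $addressIndex[$address] = $user.DisplayName
    }
}

# Each lookup is now a single hashtable hit instead of a scan over all users
$found   = $addressIndex.ContainsKey('anna@contoso.com')
$missing = $addressIndex.ContainsKey('nobody@contoso.com')

"anna@contoso.com found: $found"
"nobody@contoso.com found: $missing"
```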

SCENARIO

You’re getting some error that a specific e-mail address can’t receive or send mails. But you have no clue which user/mailbox is the owner of this specific e-mail address.

PROBLEM

Most of the time this isn’t a problem; the Exchange Management Console or EOL Admin Center will do the trick. But sometimes it can be a bit tricky if the e-mail address belongs to, say, a public folder, which isn’t scoped in the search.

SOLUTION

This quick little PowerShell one-liner will find it for you (note that wildcards go with -like; -match expects a regular expression):

Get-Recipient -resultSize unlimited | select name -expand emailAddresses | where {$_.smtpAddress -like "*EmailAddressToSearchFor*"} | Format-Table name, smtpaddress

Credit goes to Fulgan @ Ars Technica for the post here.

SCENARIO

For some reason, probably money, you can’t use a proper backup solution for your farm. So you want to use versioning as a cheap man’s backup.

PROBLEM

Going through every document library in every site in every site collection in every application to enable versioning isn’t possible. And there is no way to specify in Central Administration or declare a policy to enforce this.

SOLUTION

This PowerShell script will do the trick for you. It’s written to enable versioning for an entire web application (with easy alteration it can be scoped to a specific site/site collection). What’s neat about this is that it will not change settings on the document libraries that already have it enabled! It will not enable minor versioning, but you can just enable that if you want.

As always, use at your own risk and test in a test environment first and then scope it to a test site collection in the production farm!!

Add-PSSnapin Microsoft.SharePoint.PowerShell -erroraction SilentlyContinue

$webapp = "ENTER URL TO WEB APPLICATION"

$site = get-spsite -Limit All -WebApplication $WebApp

foreach($web in $site.AllWebs)

{

Write-Host "Inspecting " $web.Title

foreach ($list in $web.Lists)

{

if($list.BaseType -eq "DocumentLibrary")

{

$liburl = $webapp + $list.DefaultViewUrl

Write-Host "Library: " $liburl

Write-Host "Versioning enabled: " $list.EnableVersioning

Write-Host "MinorVersioning Enabled: " $list.EnableMinorVersions

Write-Host "EnableModeration: " $list.EnableModeration

Write-Host "Major Versions: " $list.MajorVersionLimit

Write-Host "Minor Versions: " $list.MajorWithMinorVersionsLimit

$host.UI.WriteLine()

if(!$list.EnableVersioning)

{

$list.EnableVersioning = $true

$list.EnableMinorVersions = $false # Set this to true if you want to enable minor versioning

#$list.MajorVersionLimit = 10 # Remove comment hashtag and set this to the max amount of major versions you want

#$list.MajorWithMinorVersionsLimit = 5 # Remove comment hashtag and set this to the max amount of minor versions you want

$list.Update()

}

}

}

}

Credit goes to Amrita Talreja @ HCL for this post which is the basis for this Powershell script.

This is a small little script I wrote for going through all administrator roles in your O365 tenant and listing out the members of each. This can be handy if you feel like you’re losing control over who has what permission in the tenant or someone says the classic “I want what he has”.

$DateStamp = Get-Date -Format "yyyy-MM-dd-HH-mm"

$LogFile = ("C:\temp\get_all_msolrolemembers-" + $DateStamp + ".csv")

# Defining the log function

Function LogWrite

{

Param ([string]$logstring)

Add-content $Logfile -value $logstring

}

LogWrite ("msolrole;email;displayname;islicensed")

$msolroles = get-msolrole

foreach($role in $msolroles)

{

$rolemembers = get-msolrolemember -roleobjectid $role.objectid

foreach($rolemember in $rolemembers)

{

LogWrite ($role.name + ";" + $rolemember.emailaddress + ";" + $rolemember.DisplayName + ";" + $rolemember.islicensed)

}

}
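If you would rather let PowerShell build the CSV than glue the strings together yourself, the built-in CSV cmdlets can do it. A small sketch with stand-in rows (the names and addresses are made up); in the script above each row would be built from `$role` and `$rolemember`.

```powershell
# Stand-in rows (assumption); in the script above these come from Get-MsolRoleMember
$rows = @(
    [pscustomobject]@{ msolrole = 'Company Administrator'; email = 'anna@contoso.com'; displayname = 'Anna Berg'; islicensed = $true }
    [pscustomobject]@{ msolrole = 'User Account Administrator'; email = 'carl@contoso.com'; displayname = 'Carl Dahl'; islicensed = $false }
)

# ConvertTo-Csv adds the header row and handles quoting;
# Export-Csv takes the same parameters plus -Path and writes straight to a file
$csvLines = $rows | ConvertTo-Csv -Delimiter ';' -NoTypeInformation
$csvLines
```

The upside is that a display name containing a semicolon no longer breaks the columns.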

SCENARIO

You’re managing Office 365 for a company. You start seeing those licenses count down and you don’t know where they are going. Then it’s time to check if you have “Shared mailboxes” that are licensed!

PROBLEM

The problem here is that sometimes user mailboxes are converted to a shared mailbox. Maybe it’s an employee that left but you still want to access the mailbox, or maybe the mailbox was accidentally created as a user mailbox to begin with. And Microsoft even has a button in Exchange Online for converting to Shared mailboxes! But the problem is that the button does indeed convert it, yet it doesn’t deactivate the license! This is probably working as intended, as deactivating the license has some other effects, like on legal hold and other services.

SOLUTION

This little command will list the mailboxes that are tagged as Shared mailboxes and look up whether their Azure AD object is licensed or not. It requires you to already have a remote PS session with Exchange Online as well as a connection to the MSOL service:

Get-Mailbox -ResultSize Unlimited -RecipientTypeDetails SharedMailbox | Get-MsolUser | Where-Object { $_.isLicensed -eq "TRUE" }

Then you may want to write something like this to automatically remove the licenses. Change “yourlicenseplan” to.. well, your license plan 🙂

Get-Mailbox -ResultSize Unlimited -RecipientTypeDetails SharedMailbox | Get-MsolUser | Where-Object { $_.isLicensed -eq "TRUE" } | foreach {Set-MsolUserLicense -UserPrincipalName $_.UserPrincipalName -RemoveLicenses "yourlicenseplan:ENTERPRISEPACK"}

Credit goes to Mohammed Wasay (https://www.mowasay.com/2016/03/office365-get-a-list-of-shared-mailboxes-that-are-accidentally-licensed/).

SCENARIO

After patching a SharePoint server with normal OS patches and reboot you are no longer able to browse to the applications or Central Admin. When looking at the log you see this error from C2WTS:

“An exception occurred when trying to issue security token: Loading this assembly would produce a different grant set from other instances. (Exception from HRESULT: 0x80131401).”

This was on a normal SharePoint Foundation 2013 server (build 15.0.4569.1506)

PROBLEM

If you check this TechNet forum post it seems to be related to “third party monitoring tools”. Unfortunately, SCOM is considered a third party tool in this case. And as it happens, we have just upgraded to SCOM2016!

SOLUTION

According to the TechNet forum post (and this official MS post) you should update or disable any third party monitoring tool. So, uninstalling the SCOM monitoring agent, rebooting, and reinstalling it with the “NOAPM=1” parameter will solve the issue (at least it did for me).

However, if that is not an option (sometimes you can’t just reboot a critical server!), disabling Load Optimization does work, even if it means your SharePoint is now unsupported. So I’m posting this for all us “it needs to be fixed now and I can’t find, update or disable whatever DLL is causing this!”-techies! Setting these registry keys works:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\.NETFramework, create a new ‘DWORD (32-bit) Value’ named “LoaderOptimization” with a value of “1”.

HKEY_LOCAL_MACHINE\SOFTWARE\Wow6432Node\Microsoft\.NETFramework, create a new ‘DWORD (32-bit) Value’ named “LoaderOptimization” with a value of “1”.
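If you prefer to set both values from PowerShell instead of clicking through regedit, a minimal sketch could look like this. It assumes an elevated prompt on the affected server and that both keys already exist (they normally do when .NET Framework is installed); `-Force` overwrites the value if it is already there.

```powershell
# Sets LoaderOptimization = 1 (DWORD) under both the 64-bit and 32-bit .NET Framework keys.
# Run from an elevated PowerShell prompt.
$paths = @(
    'HKLM:\SOFTWARE\Microsoft\.NETFramework'
    'HKLM:\SOFTWARE\Wow6432Node\Microsoft\.NETFramework'
)

foreach ($path in $paths) {
    New-ItemProperty -Path $path -Name 'LoaderOptimization' -PropertyType DWord -Value 1 -Force | Out-Null
}
```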

But as those MS people will tell you, it really isn’t a recommended solution (which is weird since it originated from MS support!).